Artificial intelligence is quickly becoming part of the asset manager’s toolkit, from generating investment research to automating client reports. But like an overconfident intern, AI sometimes “fills in the blanks” with information that sounds right but isn’t. This phenomenon, known as an AI hallucination, can turn a useful analytical tool into a source of subtle (and costly) errors. In finance, where accuracy underpins every decision, understanding how and why hallucinations happen is not just good practice; it is essential risk management.

In the context of generative AI, a hallucination occurs when a model produces information that is factually incorrect, irrelevant, or entirely fabricated — but presents it with confidence.

For example:

These errors don’t always look like mistakes. They can be highly polished and persuasive, making them easy to miss without rigorous verification.

Hallucinations are not bugs in the sense of software glitches — they’re a natural side effect of how large language models (LLMs) and some machine learning systems work:

Overgeneralization – Models sometimes apply learned patterns from one domain (e.g., historical equity trends) to situations where they don’t belong (e.g., emerging market bonds).

In other industries, an AI hallucination might cause embarrassment or a minor inconvenience. In asset management, the stakes are higher:

When portfolio managers, analysts, and client relationship teams rely on AI outputs without adequate verification, hallucinations can quietly slip into decision-making pipelines.

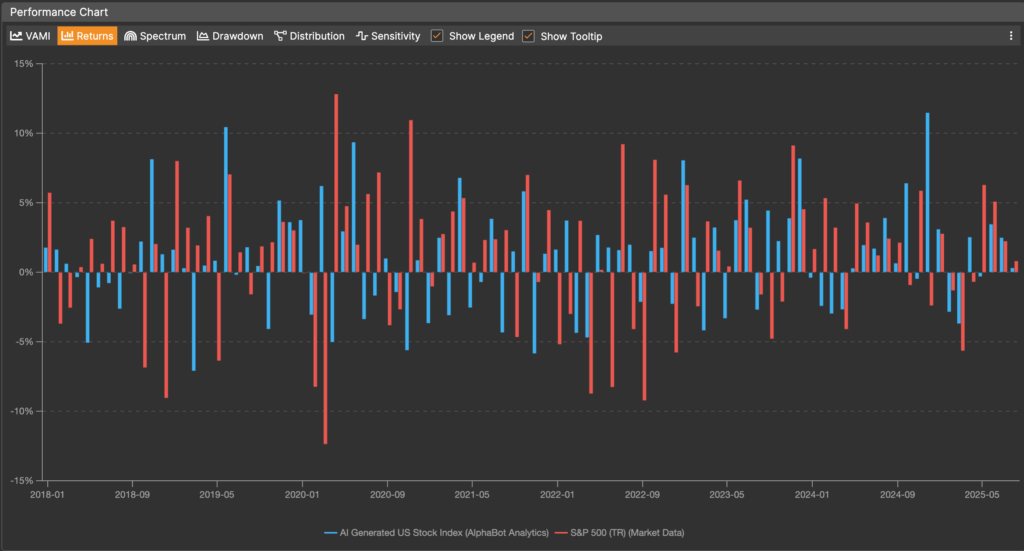

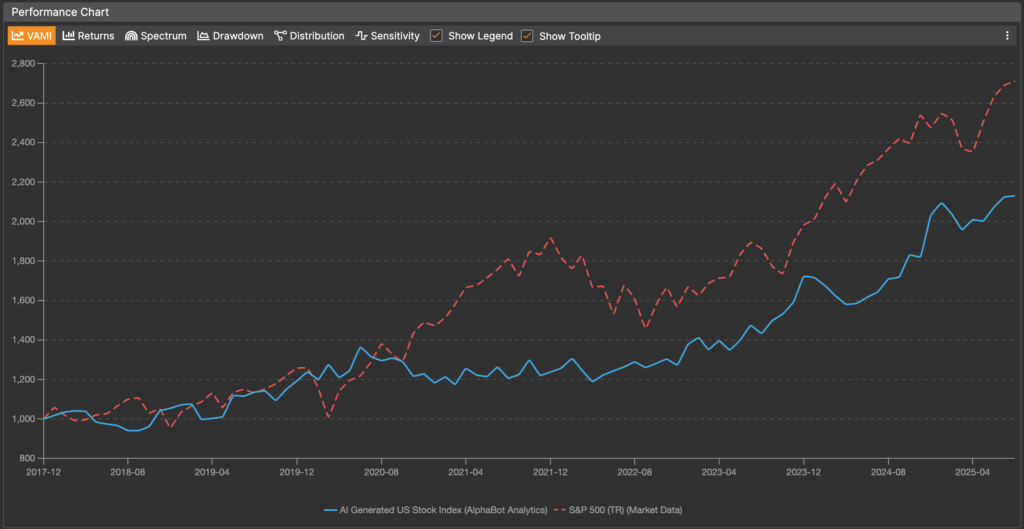

To illustrate how a naive use of AI output can produce wrong data, we asked a generative AI model to “Generate sample monthly returns for a broad US stock market index from 2010 to 2025”. The generated returns were uploaded to AlphaBot and compared with the S&P 500 index.

The resulting series failed to capture any significant features of stock market dynamics over the last few years. It missed the COVID impact entirely and only loosely resembled the bull market trend that followed, without any notable features. In fact, when we looked at the statistics, the correlation of the AI-generated returns with the S&P 500 was 0.035; that is, the two return series are essentially uncorrelated!
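A check of this kind is easy to automate. The snippet below is a minimal sketch of how a generated return series could be compared against a benchmark using the Pearson correlation. It is not AlphaBot's implementation: the function names, the 0.9 threshold, and the sample numbers are all assumptions made for illustration, and the sample numbers are placeholders rather than real market data.

```python
import math
from typing import Sequence


def pearson_correlation(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation between two equally long return series."""
    if len(xs) != len(ys):
        raise ValueError("series must have the same length")
    if len(xs) < 2:
        raise ValueError("need at least two observations")

    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n

    # Sums of cross-products and squared deviations from the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)

    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / math.sqrt(var_x * var_y)


def passes_sanity_check(
    generated: Sequence[float],
    benchmark: Sequence[float],
    min_correlation: float = 0.9,  # hypothetical threshold, tune to your use case
) -> bool:
    """Return True only if the generated series tracks the benchmark closely."""
    return pearson_correlation(generated, benchmark) >= min_correlation


if __name__ == "__main__":
    # Placeholder monthly returns for illustration only, NOT real market data.
    generated_returns = [0.010, 0.012, 0.008, 0.011, 0.009, 0.010]
    benchmark_returns = [0.021, -0.034, 0.015, -0.082, 0.047, 0.018]

    corr = pearson_correlation(generated_returns, benchmark_returns)
    print(f"correlation with benchmark: {corr:.3f}")
    print("passes sanity check:", passes_sanity_check(generated_returns, benchmark_returns))
```

A correlation test alone will not catch every problem (a series can be correlated with the benchmark and still have the wrong volatility or drawdowns), so in practice it would be one of several checks rather than a complete validation.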

Needless to say, using such data in subsequent analysis, drawing conclusions from it, and perhaps even basing advice on it is dangerous and misleading.

Some readers may point out that our prompt was very simplistic and could be refined so that the output represents the actual market index more accurately. That is exactly our point: yes, the prompt can be refined, but unless the user understands that, knows what to look for, and has a method for improving the output, the AI is just a random number generator.

AI is a tool that needs to be mastered; it is not a magic solution.

It reminds me of a genie let out of a bottle: it can grant your wish, but you have to make sure that what you ask for is exactly what you want, because the consequences of a misinterpretation can be dire.

While hallucinations can’t be eliminated entirely, you can reduce the risk with structured safeguards:

AI hallucinations are not a reason to avoid the technology — they’re a reason to use it wisely. In asset management, where every decimal point counts, treating AI outputs as a starting point rather than a final verdict is the safest path forward.

By pairing AI’s speed with human judgment and robust data governance, you can enjoy its productivity benefits without letting your portfolio fall for a mirage.

Engage with Us

Are you building diversified multi-asset class portfolios for clients? Want to see how AlphaBot and collaboration tools can help? Get in touch for a live walkthrough or trial access.

(c) AlphaBot 2025